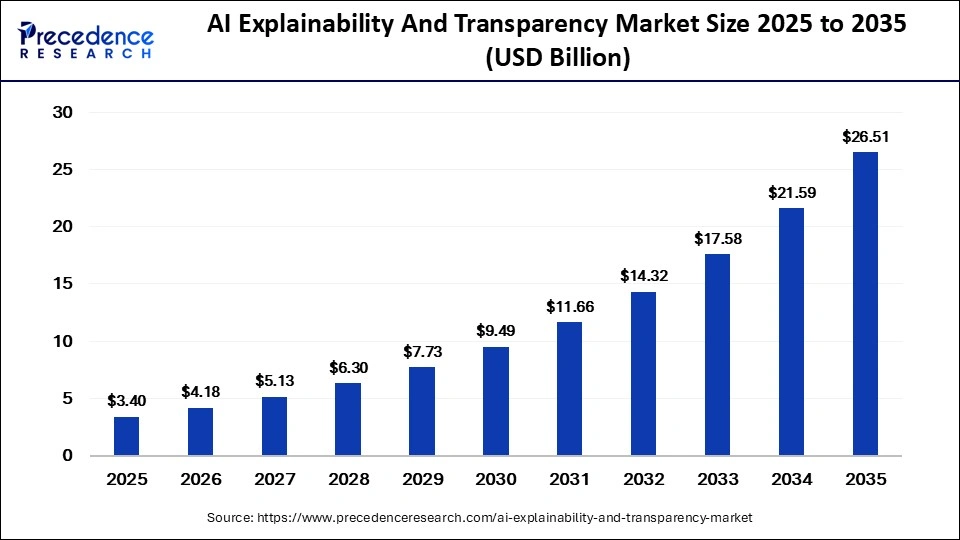

The global AI explainability and transparency market is projected to reach USD 26.51 billion by 2035, driven by regulatory compliance, ethical AI adoption, bias detection tools, and growing enterprise demand for trustworthy AI systems.

AI Explainability and Transparency Market Overview

The global AI explainability and transparency market is growing quickly as organizations prioritize ethical, accountable, and interpretable artificial intelligence systems. According to Precedence Research, the market was valued at USD 3.40 billion in 2025 and is projected to grow from USD 4.18 billion in 2026 to approximately USD 26.51 billion by 2035, a CAGR of 22.80% over the forecast period.
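The growth figures quoted above can be cross-checked with the standard compound annual growth rate formula. The following is a minimal Python sketch of that arithmetic, not a reproduction of the Precedence Research methodology; the function names `cagr` and `project` are illustrative assumptions.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.228 means 22.8%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the article, in USD billion.
size_2025 = 3.40
size_2035 = 26.51
stated_cagr = 0.2280

implied_cagr = cagr(size_2025, size_2035, years=10)
projected_2035 = project(size_2025, stated_cagr, years=10)

print(f"Implied CAGR 2025-2035: {implied_cagr:.2%}")
print(f"2035 size at the stated CAGR: USD {projected_2035:.2f} billion")
```

Under these assumptions, the implied rate comes out at roughly 22.8%, which suggests the quoted CAGR is measured from the 2025 base value.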

AI explainability and transparency solutions are becoming essential as enterprises deploy artificial intelligence across high-stakes sectors such as banking, healthcare, government, retail, and autonomous systems. Organizations are increasingly seeking tools that help interpret AI decisions, detect bias, ensure fairness, and improve accountability in automated systems.

The rapid rise of generative AI, predictive analytics, and autonomous decision-making technologies has intensified concerns surrounding black-box AI systems. As a result, enterprises and regulators worldwide are emphasizing explainable AI (XAI) frameworks, model auditing tools, and governance solutions to build trust and comply with evolving regulations.

What is AI Explainability and Transparency?

AI explainability refers to the ability of artificial intelligence systems to clearly explain how decisions, predictions, or recommendations are generated. Transparency focuses on making AI models understandable, traceable, and accountable for users, regulators, and stakeholders.

These technologies help organizations:

- Understand AI decision pathways
- Detect algorithmic bias
- Improve model accountability
- Ensure regulatory compliance
- Enhance trust in automated systems
- Monitor AI behavior in real time

Explainable AI frameworks are increasingly used in industries where decisions significantly impact individuals, including finance, healthcare, insurance, hiring, and cybersecurity.

Key Market Drivers

Rising Demand for Ethical and Trustworthy AI

One of the major drivers fueling market growth is the increasing need for trustworthy and ethical AI systems. As enterprises adopt AI-driven automation and predictive decision-making, concerns regarding fairness, accountability, and transparency are growing rapidly.

Organizations are under pressure to explain how AI systems make decisions, particularly in sensitive applications such as lending approvals, medical diagnoses, fraud detection, and recruitment. Explainability tools help enterprises reduce operational risks while strengthening consumer and regulatory trust.

Recent studies also highlight that transparency has become foundational to enterprise AI adoption, especially in mission-critical environments where autonomous AI agents increasingly influence operations.

Increasing Regulatory Pressure

Governments and regulatory agencies worldwide are implementing stricter AI governance frameworks that require organizations to ensure explainability and accountability in automated decision-making systems.

The European Union AI Act, the GDPR, and emerging AI governance laws in countries such as the United States and China are accelerating demand for explainability solutions. Regulatory requirements around transparency, traceability, and bias mitigation are encouraging enterprises to invest heavily in responsible AI infrastructure.

According to industry experts, regulatory scrutiny surrounding AI fairness and compliance is becoming one of the strongest catalysts for explainable AI adoption globally.

Expansion of Generative AI and Autonomous Systems

The rapid adoption of generative AI and autonomous systems is significantly boosting demand for explainability tools. Large Language Models (LLMs), AI copilots, and autonomous agents are increasingly integrated into enterprise operations, creating new challenges around transparency and accountability.

Organizations are implementing explainability layers such as source attribution, confidence scoring, and traceability mechanisms to reduce hallucinations and improve AI reliability.
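As a rough illustration of what such an explainability layer might record, the sketch below wraps a generated answer with its sources, a confidence score, and a trace identifier, then applies a simple release gate. Everything here is hypothetical: the `ExplainedAnswer` class, the `release_or_escalate` function, and the 0.7 threshold are assumptions made for illustration, not a vendor API or a method described in the report.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
import uuid


@dataclass
class ExplainedAnswer:
    """Hypothetical record pairing a model answer with its explanation data."""
    text: str
    sources: list[str]   # source attribution: documents the answer relies on
    confidence: float    # confidence score in [0, 1], however it is estimated
    # Traceability: a unique ID and UTC timestamp for later auditing.
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def release_or_escalate(answer: ExplainedAnswer, min_confidence: float = 0.7) -> str:
    """Release an answer only if it cites sources and clears the threshold."""
    if not answer.sources:
        return "escalate: no supporting sources"
    if answer.confidence < min_confidence:
        return "escalate: low confidence"
    return "release"


grounded = ExplainedAnswer("Policy X applies.", ["policy-handbook.pdf"], 0.92)
unsupported = ExplainedAnswer("Policy Y applies.", [], 0.95)

print(grounded.trace_id, release_or_escalate(grounded))
print(unsupported.trace_id, release_or_escalate(unsupported))
```

The design choice in this sketch is that a missing source blocks release regardless of confidence, since a confident but unattributed answer is the case reviewers can least easily verify.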

As AI systems become more autonomous, enterprises require deeper visibility into how decisions are generated to ensure operational safety and regulatory compliance.

Rising Adoption Across BFSI and Healthcare

The BFSI and healthcare sectors are among the largest adopters of explainable AI technologies due to their strict regulatory requirements and high operational risks.

The BFSI segment accounted for approximately 30% of the market share in 2025. Financial institutions are increasingly using explainability tools to improve fraud detection transparency, risk assessment, and credit scoring accountability.

Meanwhile, healthcare organizations are adopting explainable AI systems to support transparent diagnostics, patient care decisions, and treatment recommendations.

Market Restraints

Complexity of Interpreting Advanced AI Models

One of the major challenges in the AI explainability and transparency market is the complexity of interpreting highly advanced AI models such as deep neural networks and large-scale machine learning systems.

Many sophisticated AI systems operate as “black boxes,” making it difficult for developers and stakeholders to fully understand how decisions are generated. Balancing explainability with model performance and accuracy remains a significant technical challenge.

Lack of Standardized Frameworks

The absence of universally accepted standards for AI explainability and transparency creates inconsistencies across industries and regulatory environments.

Experts note that concepts such as transparency, interpretability, explainability, and traceability are often inconsistently defined, complicating implementation and compliance efforts.

This lack of standardization can slow enterprise adoption and increase operational uncertainty for organizations deploying explainable AI systems.

Integration Challenges with Existing AI Infrastructure

Many enterprises face difficulties integrating explainability tools with legacy AI systems and complex enterprise infrastructures.

Organizations often require customized solutions capable of supporting multiple AI models, governance policies, and compliance frameworks simultaneously, increasing deployment complexity and implementation costs.

Emerging Market Opportunities

Growth of Responsible AI Governance Ecosystems

The emergence of enterprise-wide responsible AI governance ecosystems is creating substantial opportunities for explainability solution providers.

Organizations are increasingly establishing dedicated responsible AI teams and governance frameworks focused on fairness, accountability, and transparency. Explainability tools are becoming integral components of enterprise AI lifecycle management systems.

Companies are also investing in automated governance platforms capable of monitoring training data, model updates, and AI decision pathways continuously.

Increasing Demand for Bias Detection and Fairness Tools

Bias detection and fairness tools represent one of the fastest-growing segments in the market.

The bias detection and fairness tools segment held approximately 22% of the market share in 2025 and is projected to grow at a CAGR of 25.5% through 2035.

Growing concerns about discrimination in AI-driven hiring, lending, insurance, and healthcare applications are accelerating adoption of fairness monitoring technologies globally.
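To make the idea of fairness monitoring concrete, the following minimal Python sketch computes two widely used group-fairness measures, the demographic parity difference and the disparate impact ratio, from a list of decisions. The data is made up purely for illustration, and the function names are assumptions rather than the API of any particular product.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Share of favorable decisions (1) per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so rates are equal
    return min(rates.values()) / highest


# Illustrative toy data: 1 = approved, 0 = declined.
decisions = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = selection_rates(decisions, groups)
gap = demographic_parity_difference(rates)
ratio = disparate_impact_ratio(rates)

print(f"Selection rates: {rates}")
print(f"Demographic parity difference: {gap:.2f}")
print(f"Disparate impact ratio: {ratio:.2f}")
```

In this toy data, group A is approved four times out of five and group B once out of five, so the gap is large. A ratio below 0.8 is often treated as a warning sign under the commonly cited four-fifths rule of thumb, though the appropriate threshold depends on the application and jurisdiction.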

Expansion of Explainable AI in Cybersecurity

AI explainability tools are increasingly being adopted in cybersecurity applications to improve threat intelligence analysis, anomaly detection, and automated incident response.

Transparent AI systems help analysts validate alerts, reduce false positives, and improve operational trust in automated security environments. Financial institutions and enterprises are particularly prioritizing explainability to strengthen cybersecurity governance and regulatory compliance.

Segment Analysis

Software Segment Dominates the Market

By component, the software segment dominated the market with a 70% share in 2025 due to rising demand for AI governance platforms, interpretability tools, and automated monitoring systems.

Organizations are increasingly implementing software solutions capable of delivering real-time model interpretability, fairness analysis, and compliance monitoring across AI operations.

The services segment is expected to grow steadily as enterprises seek consulting, implementation, and managed services for responsible AI deployment.

Cloud-Based Deployment Leads the Market

Cloud-based deployment accounted for approximately 75% of the market share in 2025 due to scalability, flexibility, and lower infrastructure costs.

Cloud-native explainability platforms enable organizations to integrate transparency tools with AI and analytics systems more efficiently while supporting real-time governance and centralized monitoring.

Model Interpretability Tools Hold Largest Technology Share

The model interpretability tools segment led the market with a 28% share in 2025. These tools help users understand feature importance, decision logic, and model behavior.
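One common way such tools estimate feature importance is permutation importance: shuffle one input column at a time and measure how much the model's accuracy drops. The sketch below is a minimal, dependency-free Python illustration on synthetic data with a hypothetical `toy_model`; it is an assumption about how a tool might work, not a description of any specific product. Libraries such as scikit-learn offer a production version as `sklearn.inspection.permutation_importance`.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    correct = sum(1 for row, label in zip(rows, labels) if model(row) == label)
    return correct / len(rows)


def permutation_importance(model, rows, labels, n_features, seed=0):
    """Accuracy drop when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [row[j] for row in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled_rows = [
            row[:j] + [value] + row[j + 1:] for row, value in zip(rows, column)
        ]
        importances.append(baseline - accuracy(model, shuffled_rows, labels))
    return importances


def toy_model(row):
    """Hypothetical model that uses feature 0 and ignores feature 1."""
    return int(row[0] > 0.5)


# Synthetic data: the label depends only on feature 0.
data_rng = random.Random(42)
rows = [[data_rng.random(), data_rng.random()] for _ in range(200)]
labels = [int(row[0] > 0.5) for row in rows]

scores = permutation_importance(toy_model, rows, labels, n_features=2)
print(f"Importance of feature 0: {scores[0]:.2f}")
print(f"Importance of feature 1: {scores[1]:.2f}")
```

Because the toy model ignores feature 1, shuffling that column leaves accuracy unchanged and its importance is zero, while shuffling feature 0 causes a substantial drop.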

Model monitoring and auditing solutions are also gaining traction as enterprises seek continuous oversight of AI system performance and compliance.

Regional Analysis

North America Dominates the Global Market

North America held the largest market share of approximately 44% in 2025 due to strong AI investments, advanced digital infrastructure, and the presence of major technology companies.

The United States remains a key contributor, driven by rising adoption of explainable AI in financial services, healthcare, and enterprise automation. The U.S. market is expected to reach nearly USD 8.91 billion by 2035.

Asia Pacific Expected to Witness Fastest Growth

Asia Pacific is projected to grow at the fastest CAGR of 26.5% during the forecast period. Rapid digital transformation, growing AI adoption, and government initiatives promoting ethical AI are driving regional expansion.

Countries such as China and India are increasingly investing in AI transparency frameworks across finance, healthcare, and public administration sectors.

Europe Maintains Strong Market Position

Europe continues to play a major role in the market due to strict regulatory requirements surrounding AI transparency and ethical governance.

The EU AI Act and the GDPR are significantly influencing explainability adoption across banking, healthcare, and public-sector organizations.

Competitive Landscape

The AI explainability and transparency market is highly competitive, with technology companies, AI startups, and consulting firms investing heavily in responsible AI solutions.

Key Companies Operating in the Market

Major players include:

- IBM
- Microsoft
- Google Cloud
- Amazon Web Services
- Oracle
- Salesforce
- Accenture
- Deloitte
- Infosys
- TCS

Get a Sample Copy: https://www.precedenceresearch.com/sample/8405
