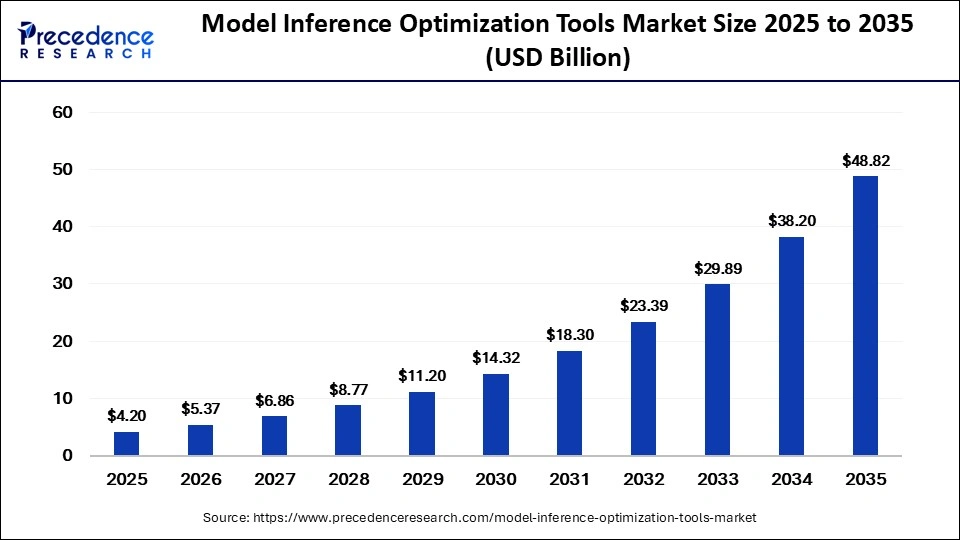

The global model inference optimization tools market is projected to reach USD 48.82 billion by 2035, driven by generative AI growth, edge computing adoption, low-latency AI deployment, and hardware-aware optimization technologies.

Introduction

Artificial intelligence models are becoming larger, smarter, and more computationally demanding than ever before. From generative AI and large language models (LLMs) to autonomous systems and real-time analytics, businesses now require AI systems that can deliver fast and efficient performance at scale.

However, deploying AI models into production environments introduces major challenges:

- High inference latency

- Rising compute costs

- Increased energy consumption

- Hardware compatibility limitations

This has created strong demand for model inference optimization tools—specialized technologies designed to improve the speed, efficiency, and scalability of AI model deployment.

According to recent industry insights, the global model inference optimization tools market was valued at USD 4.20 billion in 2025 and is expected to reach approximately USD 48.82 billion by 2035, which corresponds to a CAGR of 27.80% from 2026 to 2035.
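As a quick sanity check on these figures, the arithmetic can be sketched as below. It assumes the 2025 valuation is the base and that growth compounds over ten annual periods (2026 through 2035); the function names are illustrative and not taken from the report.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value in `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, cagr: float, years: int) -> float:
    """Value after compounding start_value at `cagr` for `years` periods."""
    return start_value * (1 + cagr) ** years


# Figures quoted above, in USD billions. Ten periods is an assumption.
cagr = implied_cagr(4.20, 48.82, 10)
projected_2035 = project(4.20, 0.278, 10)

print(f"Implied CAGR: {cagr:.2%}")
print(f"Projected 2035 value: USD {projected_2035:.2f} billion")
```

Under that assumption the implied rate comes out at roughly 27.8%, so the quoted start value, end value, and growth rate are mutually consistent.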


What Are Model Inference Optimization Tools?

Model inference optimization tools are software and hardware solutions designed to improve the performance of AI models during inference—the stage where trained models generate predictions or outputs using new data.

These tools help organizations:

- Reduce latency

- Improve throughput

- Lower infrastructure costs

- Optimize GPU and accelerator utilization

- Deploy AI models efficiently across cloud, edge, and hybrid environments

Inference optimization technologies are becoming essential for deploying:

- Large language models (LLMs)

- Computer vision systems

- Recommendation engines

- Autonomous AI systems

- Real-time analytics platforms

Why the Market Is Growing Rapidly

Explosion of Generative AI and Large Models

The rapid growth of generative AI applications is one of the strongest drivers of the market.

Modern AI systems such as:

- Chatbots

- AI copilots

- Multimodal AI systems

- Real-time recommendation engines

require massive computational resources during inference. Organizations are increasingly adopting optimization tools to reduce the operational cost of running these large-scale AI workloads.

Rising Demand for Low-Latency AI Applications

Industries increasingly rely on real-time AI systems where even milliseconds matter.

Applications such as:

- Autonomous vehicles

- Fraud detection

- Industrial automation

- Smart surveillance

- Real-time customer support

require ultra-fast inference performance.

Optimization tools help ensure rapid AI response times while minimizing computational overhead.

Growth of Edge AI and IoT

Edge AI is rapidly expanding across:

- Smartphones

- IoT devices

- Robotics

- Industrial equipment

- Smart cities

These devices often operate in resource-constrained environments with limited compute power and battery capacity.

As a result, organizations are increasingly adopting lightweight optimization techniques such as:

- Quantization

- Pruning

- Knowledge distillation

- Hardware-aware inference optimization

Key Technologies Driving the Market

Quantization Leads the Market

Quantization accounted for approximately 30% market share in 2025, making it the dominant optimization technique.

Quantization converts model weights and activations from higher-precision formats (typically FP32) to lower-precision numerical formats such as:

- INT8

- FP16

- INT4

Benefits include:

- Lower memory usage

- Faster inference speed

- Reduced power consumption

- Improved scalability

Quantization has become critical for deploying large AI models efficiently across edge and cloud infrastructure.
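To make the idea concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It is one simple scheme chosen for illustration, not the method of any particular vendor tool; the function names and sample weights are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


# Hypothetical weights, for illustration only.
weights = [0.82, -1.27, 0.05, 0.40, -0.33]
q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q_weights)
print(f"scale={scale:.4f}, max round-trip error={max_error:.6f}")
```

Each value is stored in 8 bits instead of 32, and the round-trip error is bounded by half the scale. That bound is the accuracy cost that real toolchains try to keep small through calibration and finer-grained (per-channel or per-group) scales.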

Pruning and Sparsity Optimization

Pruning removes redundant parameters from neural networks, reducing model size and compute requirements while aiming to preserve accuracy.

These techniques help:

- Accelerate inference

- Reduce compute requirements

- Improve deployment efficiency

Pruning is increasingly combined with other compression techniques for maximum optimization efficiency.
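One common form is unstructured magnitude pruning, which zeroes the weights with the smallest absolute values. The sketch below assumes that variant and uses hypothetical weights; production tools typically prune iteratively and fine-tune afterwards to recover accuracy.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest absolute value."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest magnitude.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_idx = set(order[:n_prune])
    return [0.0 if i in pruned_idx else w for i, w in enumerate(weights)]


# Hypothetical layer weights, for illustration only.
weights = [0.91, -0.02, 0.45, 0.003, -0.67, 0.08, -0.11, 0.30]
pruned = magnitude_prune(weights, sparsity=0.5)

print(pruned)
print(f"zeros: {pruned.count(0.0)} of {len(pruned)}")
```

The zeroed entries only translate into faster inference when the runtime or hardware can skip them, which is why pruning is often paired with sparsity-aware kernels or structured patterns.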

Knowledge Distillation Expanding Adoption

Knowledge distillation trains a smaller “student” model to reproduce the outputs of a larger “teacher” model.

This enables organizations to:

- Maintain high accuracy

- Deploy lightweight AI systems

- Optimize inference in constrained environments

This approach is becoming increasingly important for edge AI applications.
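The core of the technique is a loss that pulls the student's output distribution toward the teacher's. A minimal sketch follows, assuming the commonly used formulation: KL divergence between temperature-softened softmax outputs, scaled by the temperature squared. The logits and function names are hypothetical, and a real training loop would usually combine this term with the ordinary task loss.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by temperature squared."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


# Hypothetical logits for a three-class problem.
teacher = [4.0, 1.0, 0.5]
good_student = [3.8, 1.1, 0.4]
poor_student = [0.5, 1.0, 4.0]

loss_good = distillation_loss(good_student, teacher)
loss_poor = distillation_loss(poor_student, teacher)
print(f"close student: {loss_good:.4f}, distant student: {loss_poor:.4f}")
```

A student whose logits track the teacher's gets a loss near zero, while one that ranks the classes differently is penalized heavily. A higher temperature softens both distributions so the student also learns from the teacher's relative confidence in the non-top classes.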

Hardware-Aware Optimization Becomes Critical

One of the biggest industry shifts is the rise of hardware-aware optimization.

Modern optimization tools are increasingly designed specifically for:

- GPUs

- TPUs

- NPUs

- AI accelerators

- FPGA systems

By tailoring inference execution to specific hardware architectures, organizations can significantly improve:

- Performance

- Throughput

- Cost efficiency

Key Market Segment Insights

By Tool Type

Inference Acceleration Engines Lead the Market

The inference acceleration engines segment held approximately 28% market share in 2025.

These tools include:

- Runtime engines

- Tensor optimization systems

- AI compilers

They help organizations execute pre-trained AI models with:

- Low latency

- High throughput

- Better hardware efficiency

Edge AI Optimization Tools Growing Fastest

The edge AI optimization tools segment is expected to grow at the fastest CAGR during the forecast period.

This growth is driven by:

- Expansion of IoT ecosystems

- Rise of edge computing

- Real-time AI applications

- Demand for on-device intelligence

By Deployment Environment

Cloud-Based Optimization Dominates

Cloud-based optimization accounted for approximately 55% market share in 2025.

Cloud deployment offers:

- Scalability

- Centralized AI management

- Faster deployment cycles

- Cost-effective infrastructure scaling

Cloud-native AI optimization platforms are becoming standard for enterprise AI deployment.

Edge and On-Device Optimization Growing Rapidly

The edge/on-device segment is projected to grow at the fastest rate due to increasing demand for:

- Offline AI processing

- Reduced latency

- Privacy-preserving AI

- Autonomous systems

AI Workloads Reshaping Optimization Needs

The rapid evolution of AI workloads is driving demand for specialized optimization platforms.

Modern workloads include:

- Large language models (LLMs)

- Multimodal AI systems

- Vision transformers

- Autonomous AI agents

These advanced models require optimized inference pipelines capable of balancing:

- Accuracy

- Speed

- Power efficiency

- Cost-effectiveness

Industry Applications

Real-Time Analytics Leads the Market

Real-time analytics accounted for approximately 28% market share in 2025.

Optimization tools support:

- Live fraud detection

- Dynamic pricing systems

- Predictive maintenance

- Instant customer personalization

Autonomous Systems Expanding Rapidly

AI optimization is increasingly critical for:

- Autonomous vehicles

- Robotics

- Drones

- Smart manufacturing systems

These applications require ultra-low-latency inference for real-time decision-making.

Regional Insights

North America Leads the Market

North America accounted for approximately 42% market share in 2025.

The region benefits from:

- Advanced AI infrastructure

- Strong cloud computing ecosystems

- Major AI hardware manufacturers

- High enterprise AI adoption

The United States remains a global leader in AI optimization innovation.

Asia-Pacific Emerging as Fastest Growing Region

Asia-Pacific is projected to witness the fastest growth during the forecast period.

Growth is driven by:

- Expanding AI ecosystems

- Rapid cloud adoption

- Government AI initiatives

- Increasing semiconductor innovation

Countries such as China, India, Japan, and South Korea are investing heavily in AI infrastructure and edge computing.

Key Industry Trends

Several major trends are shaping the future of the market:

- Hardware-aware optimization platforms

- Automated inference tuning systems

- Specialized LLM optimization toolchains

- AI infrastructure sustainability initiatives

- Integration of optimization into MLOps workflows

- Increasing focus on energy-efficient AI deployment

The industry is rapidly moving toward fully automated AI optimization ecosystems.

Competitive Landscape

Major companies operating in the market include:

- NVIDIA

- Amazon Web Services (AWS)

- Google Cloud

- Microsoft

- Intel Corporation

- AMD

- Qualcomm Technologies

- Hugging Face

- Cerebras Systems

- Groq

These companies are investing heavily in:

- AI accelerators

- Inference runtimes

- Edge AI optimization

- Low-bit quantization systems

- Hardware-specific optimization frameworks

Challenges Facing the Market

Despite strong growth potential, the industry faces several obstacles:

- Hardware fragmentation

- High infrastructure costs

- Model accuracy trade-offs

- Complexity of optimization workflows

- Shortage of specialized AI optimization expertise

Balancing inference speed with model accuracy remains one of the industry’s biggest technical challenges.

Future Outlook

The future of the model inference optimization tools market will be shaped by:

- Autonomous AI systems

- Agentic AI workflows

- Edge-native AI applications

- Real-time multimodal AI

- Sustainable AI infrastructure

- Fully automated inference optimization pipelines

As AI deployment scales globally, optimization tools will become foundational infrastructure for enterprise AI operations.

Conclusion

The model inference optimization tools market is rapidly becoming one of the most critical segments of the AI ecosystem.

As AI models continue to grow in size and complexity, organizations must find ways to deliver faster, cheaper, and more efficient inference performance. Optimization technologies are emerging as the bridge between cutting-edge AI innovation and scalable real-world deployment.

The future of AI will not depend solely on building larger models—it will depend on deploying them intelligently, efficiently, and sustainably.

Get Sample link: https://www.precedenceresearch.com/sample/8383
